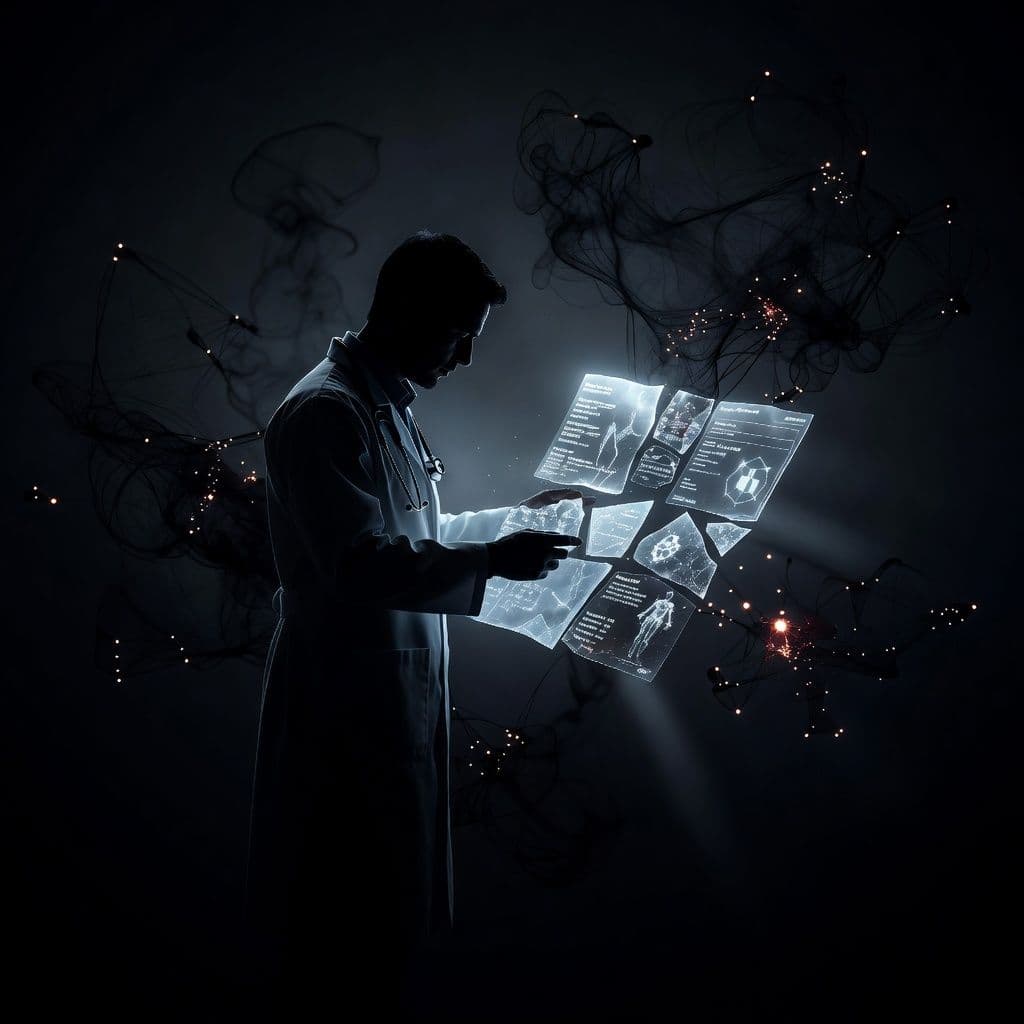

New Benchmark Tests AI's Ability to Design Real Drugs

Researchers have created a new benchmark to test whether AI can handle real-world drug design. It could change how we discover life-saving medications.

The benchmark, called SMDD-Bench, is presented as the first to evaluate AI's ability to design drugs for real-world use. It focuses on small-molecule drug design, a key area in medicine.

SMDD-Bench is a challenging, multi-turn, long-horizon agentic benchmark made up of 502 tasks. The tasks cover diverse chemistries and targets, giving it broad coverage of AI's capabilities in drug design.

The benchmark is designed to be more realistic than previous tests. Its multi-turn interactions mimic the real-world process of drug design, which makes it a valuable tool for evaluating AI's potential in this field.

via arXiv cs.AI #ai #drug-design #research